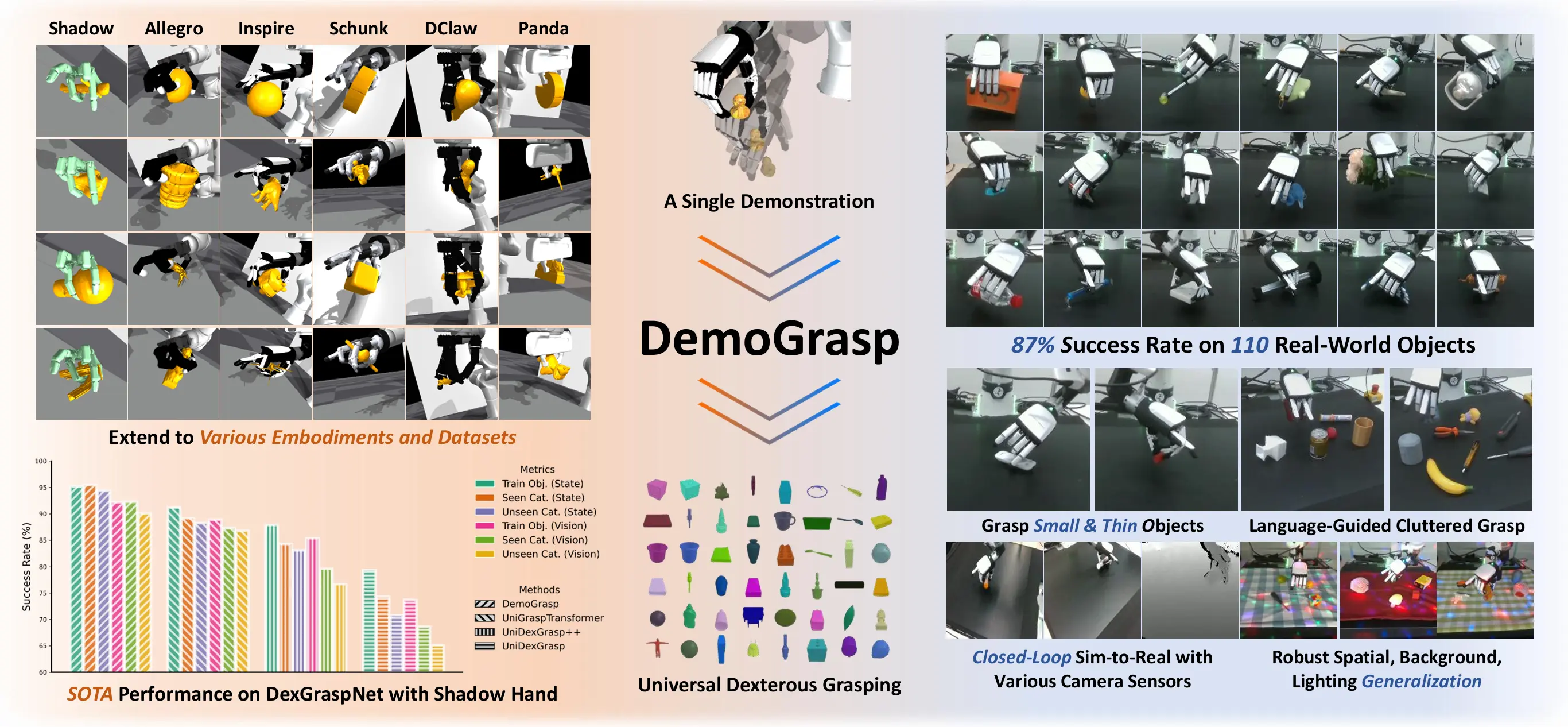

Universal grasping with multi-fingered dexterous hands is a fundamental challenge in robotic manipulation. We propose DemoGrasp, a simple yet effective method for learning universal dexterous grasping starting from a single successful demonstration.

Key Concept

While recent approaches successfully learn closed-loop grasping policies using reinforcement learning (RL), the inherent difficulty of high-dimensional, long-horizon exploration necessitates complex reward and curriculum design.

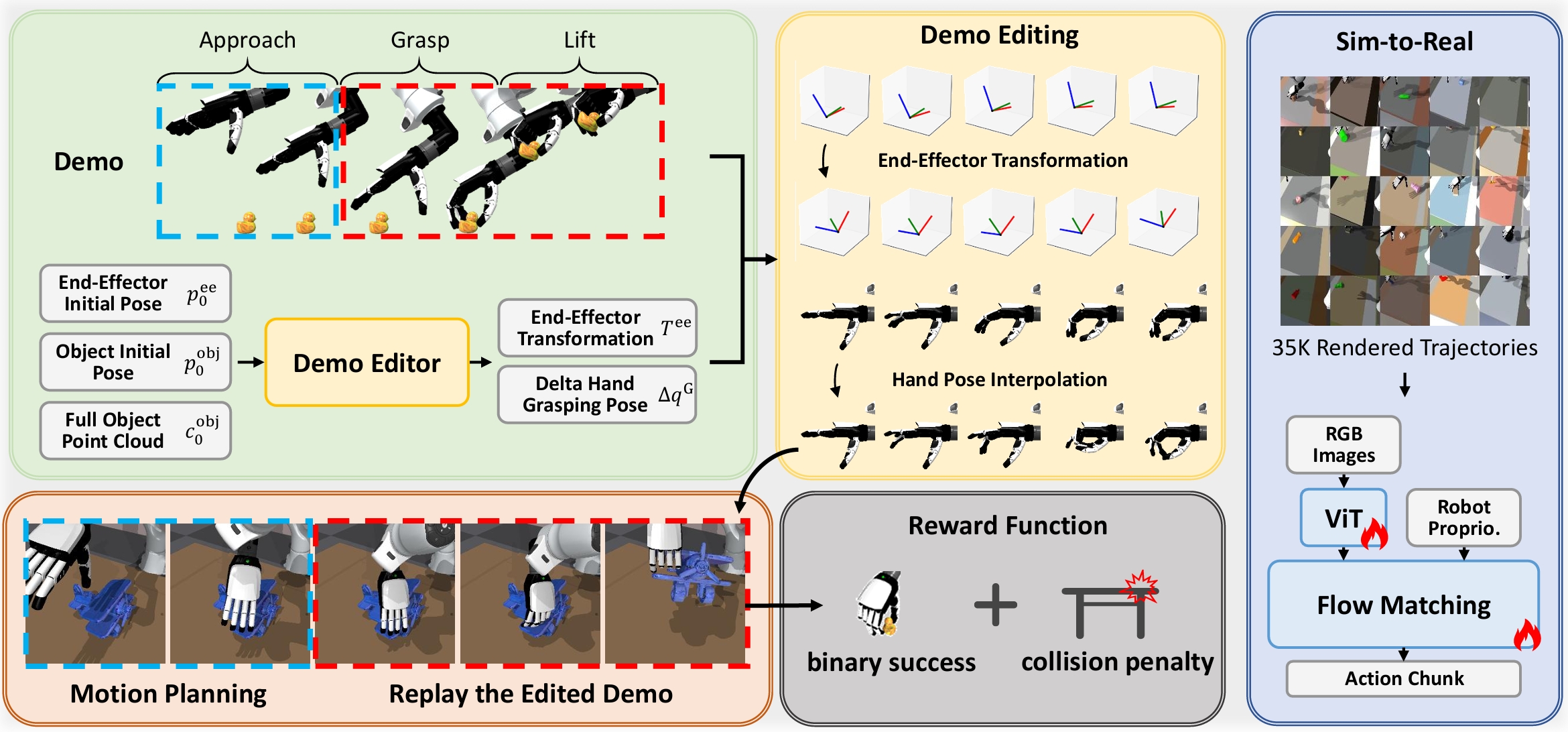

DemoGrasp adapts a single demonstration to novel objects and poses by editing the robot actions: changing the wrist pose determines where to grasp, and changing the hand joint angles determines how to grasp. We formulate this trajectory editing as a single-step Markov Decision Process (MDP) and use RL to optimize a universal policy across hundreds of objects in parallel in simulation.
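The editing idea can be illustrated with a minimal sketch. It assumes the demonstration is stored as a sequence of 4x4 homogeneous wrist poses plus per-step hand joint angles, and that one rigid transform and one joint-angle offset are applied uniformly to every step. The function name `edit_demo`, the array layout, and the uniform application are illustrative assumptions, not details taken from the DemoGrasp implementation.

```python
import numpy as np


def edit_demo(wrist_poses, hand_joints, wrist_transform, delta_hand):
    """Edit a demonstration trajectory (hypothetical helper, not the authors' code).

    wrist_poses:     (T, 4, 4) homogeneous wrist poses from the demonstration
    hand_joints:     (T, J) hand joint-angle targets from the demonstration
    wrist_transform: (4, 4) rigid transform, controls *where* to grasp
    delta_hand:      (J,) joint-angle offsets, controls *how* to grasp
    """
    # Left-multiply every wrist pose by the same transform (broadcasts over T).
    edited_wrist = wrist_transform @ wrist_poses
    # Assumption: the same offset is added to the hand joints at every step.
    edited_hand = hand_joints + delta_hand
    return edited_wrist, edited_hand
```

Under these assumptions, a pure translation in `wrist_transform` shifts the whole approach path, while a nonzero `delta_hand` opens or closes individual fingers relative to the demonstrated grasp.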

Real-World Demo

Single Object

Cluttered Environment

Instruction Following

Pipeline

DemoGrasp uses a single demonstration trajectory to learn universal dexterous grasping, formulating each grasping trial as a demonstration-editing process.

For each trial, the Demo Editor policy takes observations at the first timestep and outputs an end-effector transformation and a delta hand pose. The actions in the demonstration are then transformed accordingly and applied in the simulator. The policy is trained using RL across diverse objects, optimizing a simple reward consisting of binary success and a collision penalty.
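The single-step structure of a trial and the reward described above can be sketched as follows. The page does not say how the success term and the collision penalty are combined, so the additive form and the `collision_penalty` weight below are assumptions, and `RolloutResult`, `grasp_reward`, and `run_trial` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class RolloutResult:
    success: bool   # whether the grasp succeeded at the end of the rollout
    collided: bool  # whether an unwanted collision occurred during the rollout


def grasp_reward(result: RolloutResult, collision_penalty: float = 0.5) -> float:
    """Binary success minus a collision penalty (weight is an assumed value)."""
    return float(result.success) - collision_penalty * float(result.collided)


def run_trial(
    policy: Callable[[Any], Any],
    rollout: Callable[[Any], RolloutResult],
    first_obs: Any,
) -> float:
    """One trial as a single-step episode: observe once, act once, get one reward."""
    action = policy(first_obs)   # edit parameters chosen from the first observation
    result = rollout(action)     # edited demonstration is executed in the simulator
    return grasp_reward(result)  # the episode terminates after this one decision
```

Because the policy makes only one decision per trial, the RL problem has no multi-step credit assignment, which is consistent with the simple reward the text describes.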

Experiments

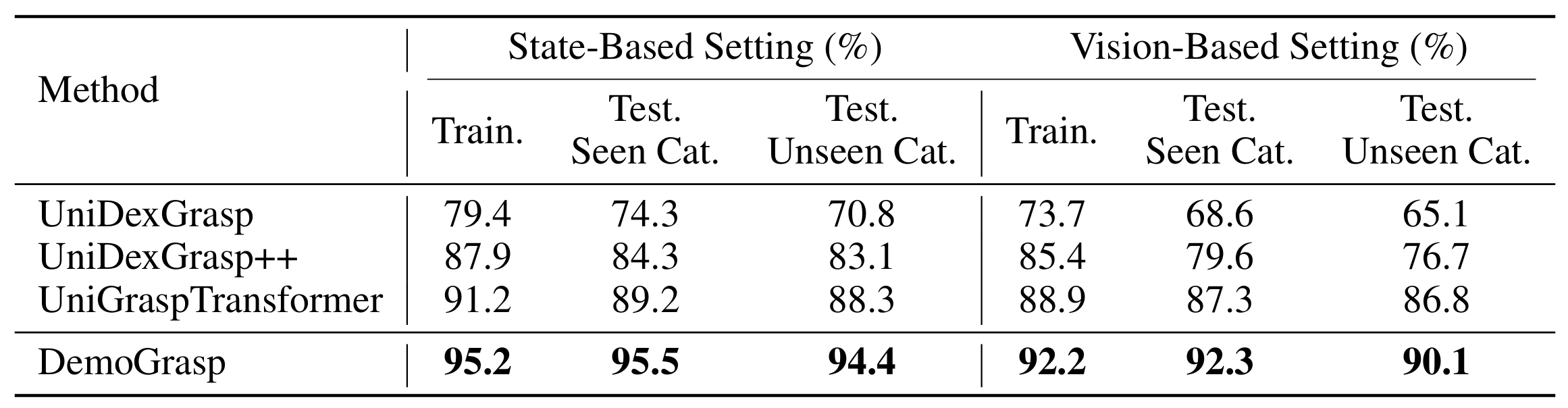

Simulation Results

DemoGrasp achieves state-of-the-art performance on DexGraspNet with ShadowHand. Results are reported for both state-based and vision-based settings on 3,200 training objects (Train.), 141 unseen objects from seen categories (Test Seen Cat.), and 100 unseen objects from unseen categories (Test Unseen Cat.).

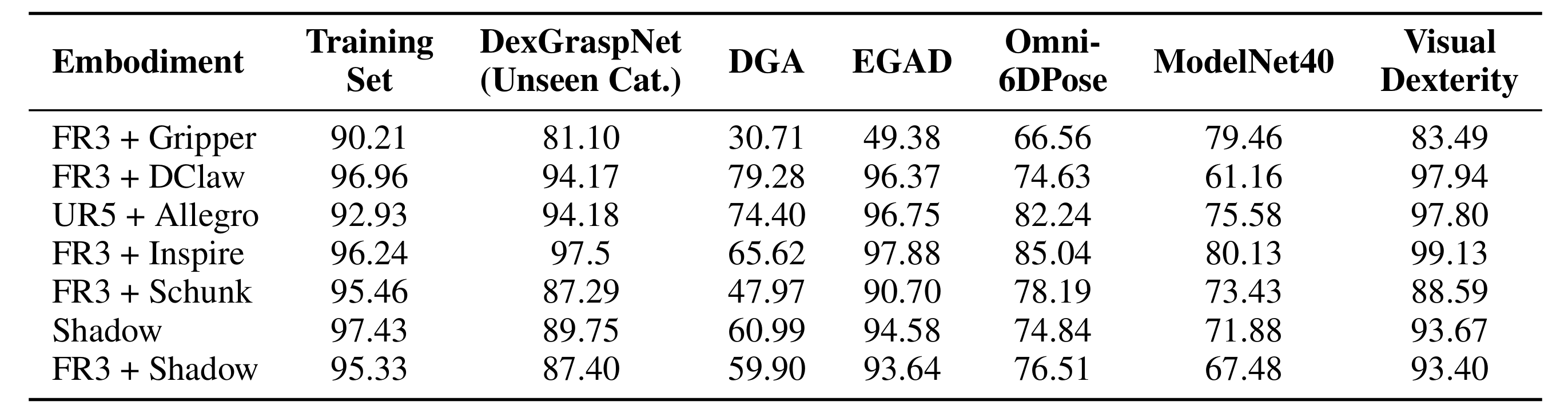

Embodiment Generalization

DemoGrasp is extensible to any robotic hand embodiment without hyperparameter tuning, achieving high zero-shot success rates on various object datasets while being trained on only 175 objects.

Real-World Results

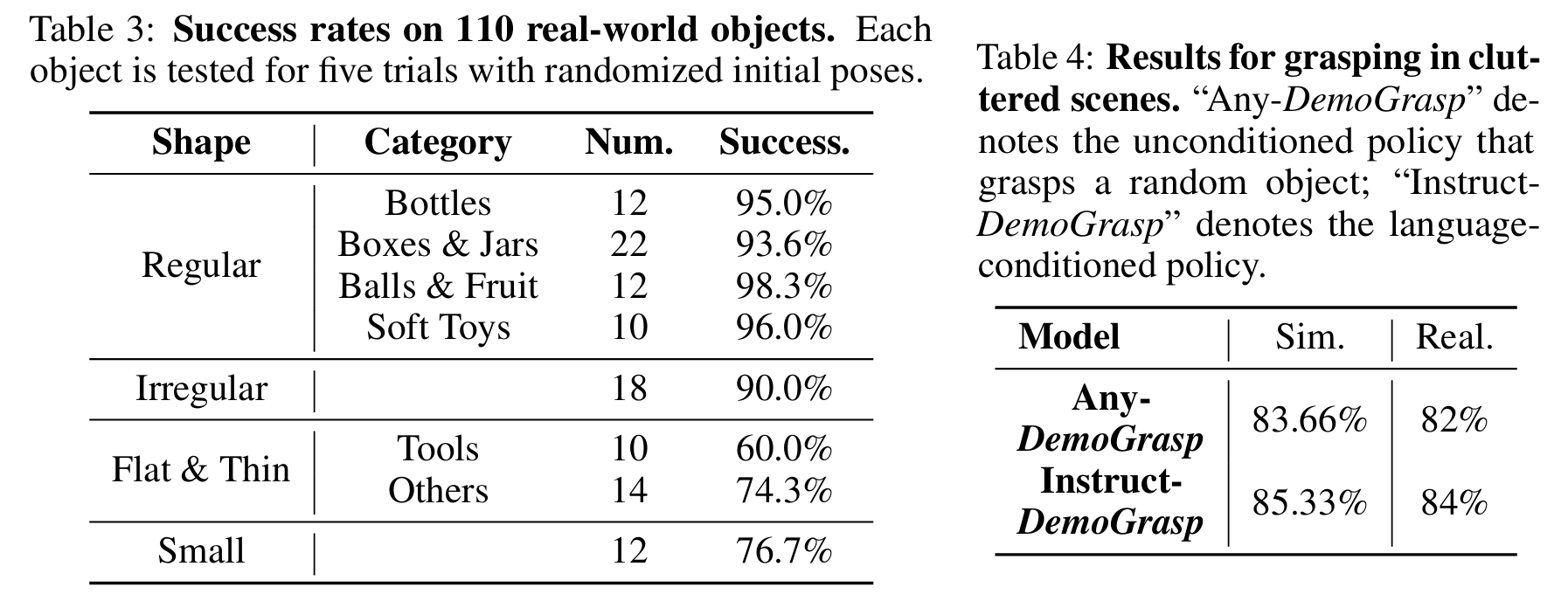

DemoGrasp successfully grasps 110 objects on a real robot, including small and thin objects. It also extends to real-world cluttered grasping and instruction following by adding random distractor objects and automatically generated language descriptions during vision-based data collection in simulation.

Failure Cases

Citation

@article{yuan2025demograsp,
  title={DemoGrasp: Universal Dexterous Grasping from a Single Demonstration},
  author={Yuan, Haoqi and Huang, Ziye and Wang, Ye and Mao, Chuan and Xu, Chaoyi and Lu, Zongqing},
  journal={arXiv preprint arXiv:2509.22149},
  year={2025}
}