We present DemoHLM, a framework that enables generalizable humanoid loco-manipulation on real robots from only a single demonstration in simulation.

Key Concepts

DemoHLM introduces a hierarchical framework that integrates two components: a universal whole-body controller for omnidirectional humanoid mobility, and manipulation policies trained via imitation learning from synthetic data.

Starting from a single VR-teleoperated demonstration in simulation, DemoHLM generates diverse training data and learns manipulation policies that generalize across tasks and spatial variations. Real-world experiments on a Unitree G1 humanoid robot validate strong sim-to-real transfer and robust performance across ten loco-manipulation tasks.
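The hierarchy described above can be pictured as two loops running at different rates. A high-level manipulation policy reads observations and emits commands, and a low-level whole-body controller tracks the latest command at every control step. The sketch below is a minimal illustration under stated assumptions, not DemoHLM's code: the `WholeBodyCommand` fields, the `run_hierarchy` function, and the `decimation` ratio between the two rates are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class WholeBodyCommand:
    """Hypothetical interface between the two levels (an assumption).

    Base velocities drive omnidirectional locomotion; the upper-body
    targets stand in for whatever manipulation targets the policy emits.
    """
    vx: float = 0.0        # forward velocity (m/s)
    vy: float = 0.0        # lateral velocity (m/s)
    yaw_rate: float = 0.0  # turning rate (rad/s)
    upper_body_targets: List[float] = field(default_factory=list)


def run_hierarchy(
    get_observation: Callable[[], object],
    policy: Callable[[object], WholeBodyCommand],
    controller: Callable[[WholeBodyCommand], None],
    total_steps: int,
    decimation: int,
) -> int:
    """Run the controller every step and the policy every `decimation` steps.

    Returns how many times the high-level policy was queried.
    """
    if decimation < 1:
        raise ValueError("decimation must be >= 1")
    command: Optional[WholeBodyCommand] = None
    queries = 0
    for step in range(total_steps):
        if step % decimation == 0:
            # Closed loop: each policy query sees a fresh observation.
            command = policy(get_observation())
            queries += 1
        # Between queries, the controller keeps tracking the latest command.
        controller(command)
    return queries
```

For example, with `total_steps=50` and `decimation=10`, the policy is queried 5 times while the controller receives 50 commands. Separating the levels this way means a task-specific policy only has to produce commands while one controller handles balance and locomotion; this is the general motivation for such hierarchies, not a statement of DemoHLM's exact interface.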

Real-World Demo

Loco-Manipulation Tasks (third-person & ego-view, 1x speed)

Method Overview

Overview of DemoHLM. For each task, we collect a single demonstration via VR teleoperation in simulation and record the robot trajectory in the object frame. This trajectory is then used to generate synthetic data covering both the pre-manipulation and manipulation phases. A manipulation policy is trained on this data via imitation learning and deployed on a real humanoid robot with closed-loop visual feedback.
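Recording the demonstration in the object frame is what makes a single trajectory reusable: once poses are expressed relative to the object, they can be replayed for a new object placement by composing them with the new object pose. The sketch below illustrates this idea with planar SE(2) poses `(x, y, yaw)` for brevity, whereas a real humanoid needs full 3D poses. The function names, the nominal object pose, and the sampling ranges are illustrative assumptions, not DemoHLM's implementation, and the sketch covers only retargeting of poses, not the pre-manipulation phase or simulated rollouts.

```python
import math
import random
from typing import List, Tuple

Pose2D = Tuple[float, float, float]  # (x, y, yaw) in a planar frame


def wrap(angle: float) -> float:
    """Wrap an angle to (-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def compose(a: Pose2D, b: Pose2D) -> Pose2D:
    """Map pose b, expressed in frame a, into a's parent frame."""
    ax, ay, at = a
    bx, by, bt = b
    c, s = math.cos(at), math.sin(at)
    return (ax + c * bx - s * by, ay + s * bx + c * by, wrap(at + bt))


def inverse(a: Pose2D) -> Pose2D:
    """Pose that undoes a, so compose(inverse(a), a) is the identity."""
    ax, ay, at = a
    c, s = math.cos(at), math.sin(at)
    return (-c * ax - s * ay, s * ax - c * ay, wrap(-at))


def to_object_frame(traj_world: List[Pose2D], obj: Pose2D) -> List[Pose2D]:
    """Re-express a recorded world-frame trajectory relative to the object."""
    inv = inverse(obj)
    return [compose(inv, p) for p in traj_world]


def to_world_frame(traj_obj: List[Pose2D], obj: Pose2D) -> List[Pose2D]:
    """Replay an object-frame trajectory for an object placed at obj."""
    return [compose(obj, p) for p in traj_obj]


def generate_dataset(
    traj_obj: List[Pose2D],
    nominal_obj: Pose2D,
    n: int,
    rng: random.Random,
    xy_noise: float = 0.2,          # hypothetical placement range (m)
    yaw_noise: float = math.pi / 8,  # hypothetical yaw range (rad)
) -> List[Tuple[Pose2D, List[Pose2D]]]:
    """Sample n perturbed object placements and retarget the demo to each."""
    dataset = []
    for _ in range(n):
        obj = (
            nominal_obj[0] + rng.uniform(-xy_noise, xy_noise),
            nominal_obj[1] + rng.uniform(-xy_noise, xy_noise),
            wrap(nominal_obj[2] + rng.uniform(-yaw_noise, yaw_noise)),
        )
        dataset.append((obj, to_world_frame(traj_obj, obj)))
    return dataset
```

Because every generated trajectory is the same object-frame motion composed with a different object pose, converting any sample back with `to_object_frame` recovers the original demonstration, while its world-frame poses differ from sample to sample.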

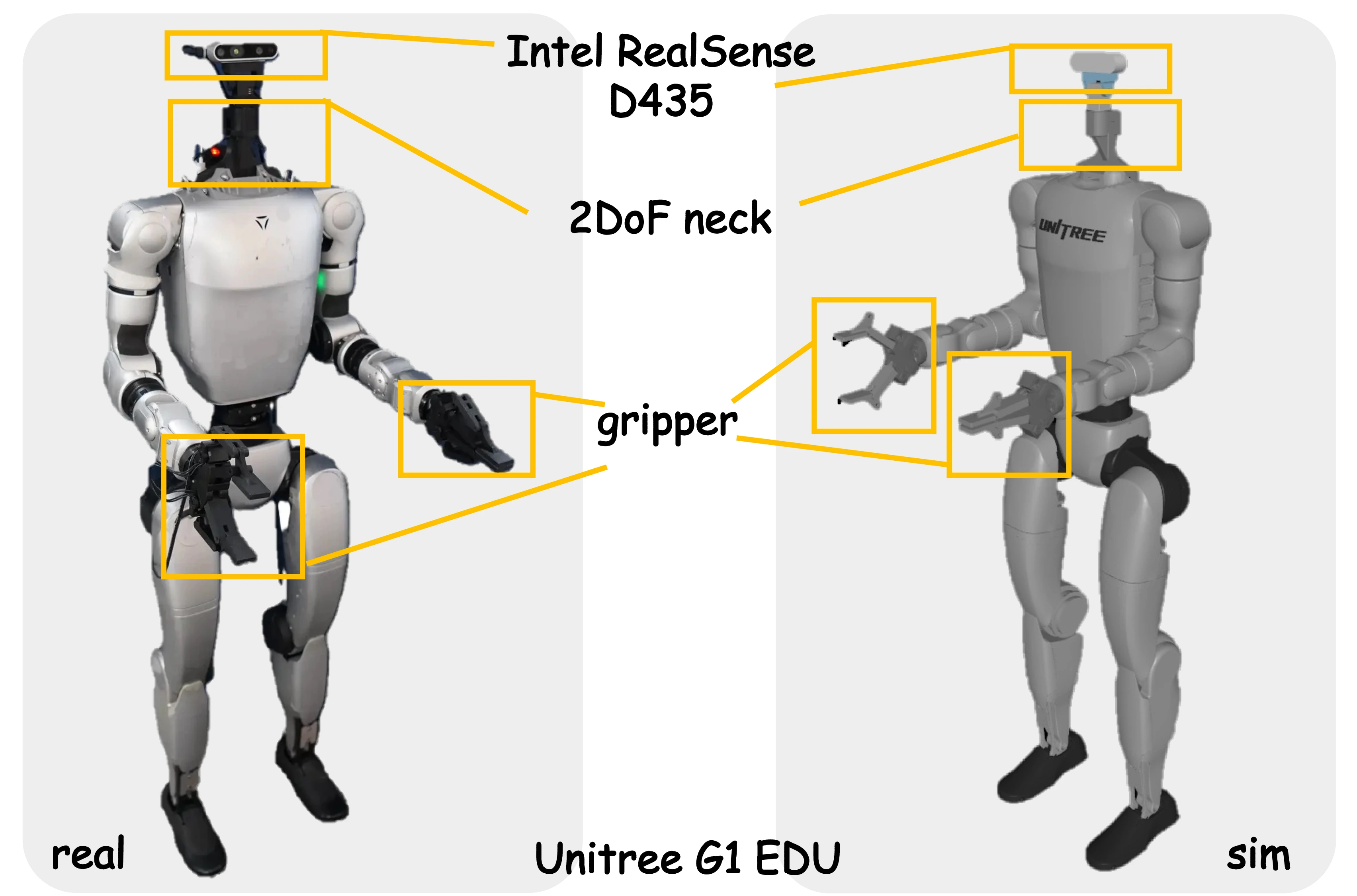

Hardware Design. We use a Unitree G1 humanoid robot in real-world experiments. To enable active vision, we mount a 2-DoF neck with an Intel RealSense D435 RGB-D camera. For tasks involving small objects, we attach parallel grippers to both end effectors.
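The page does not say how the 2-DoF neck is commanded. As a hypothetical illustration of active vision with a yaw-pitch neck, the sketch below computes the two joint angles that point the camera at a target point, then clamps them to joint limits. The frame conventions (x forward, y left, z up; positive pitch tilts down), the function names, and the limit values are all assumptions, not the authors' controller or the robot's real limits.

```python
import math
from typing import Tuple


def look_at_angles(target: Tuple[float, float, float]) -> Tuple[float, float]:
    """Yaw and pitch (rad) that aim the camera's optical axis at target.

    Assumed conventions: target is in the neck base frame with x forward,
    y left, z up. Yaw rotates about z (positive turns left); pitch then
    rotates about the yawed y axis (positive tilts down).
    """
    x, y, z = target
    yaw = math.atan2(y, x)
    pitch = math.atan2(-z, math.hypot(x, y))
    return yaw, pitch


def clamp(value: float, low: float, high: float) -> float:
    """Limit value to the closed interval [low, high]."""
    return max(low, min(high, value))


def neck_command(
    target: Tuple[float, float, float],
    yaw_limit: float = 1.0,   # hypothetical symmetric yaw limit (rad)
    pitch_min: float = -0.5,  # hypothetical upward tilt limit (rad)
    pitch_max: float = 1.0,   # hypothetical downward tilt limit (rad)
) -> Tuple[float, float]:
    """Look-at angles clamped to the (assumed) joint limits."""
    yaw, pitch = look_at_angles(target)
    return clamp(yaw, -yaw_limit, yaw_limit), clamp(pitch, pitch_min, pitch_max)
```

For instance, a point one meter ahead and one meter below the neck gives a yaw of 0 and a downward pitch of pi/4. Clamping keeps the command feasible when the target lies outside the neck's reachable range.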

Experiments

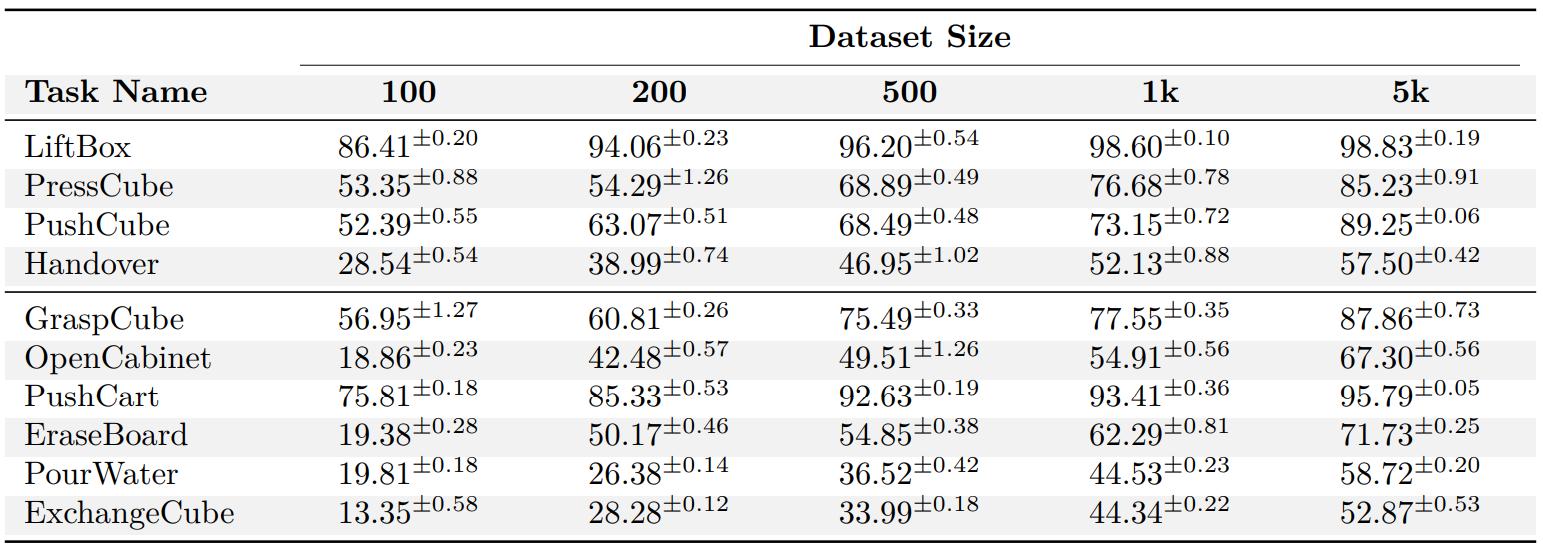

Scaling Performance

Success rates (%) of all tasks in simulation for synthetic datasets of different sizes.

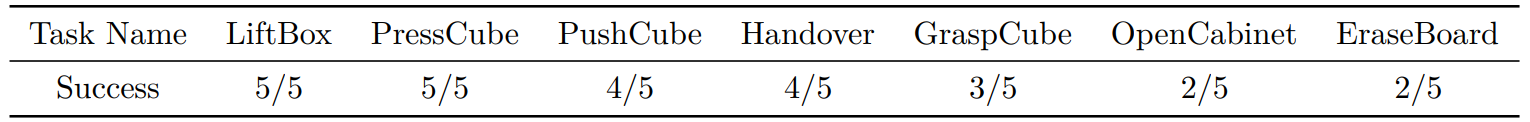

Real-World Experiments

Success rates in real-world tests.

Real-world policy rollouts. Each pair of rows shows time-aligned first-person and third-person views. Frames progress from left to right over time, demonstrating robust performance under spatial variations.

Citation

@article{demohlm2025,
  title={DemoHLM: From One Demonstration to Generalizable Humanoid Loco-Manipulation},
  author={Fu, Yuhui and Xie, Feiyang and Xu, Chaoyi and Xiong, Jing and Yuan, Haoqi and Lu, Zongqing},
  journal={arXiv preprint arXiv:2510.11258},
  year={2025}
}